Anyline Mobile Scanning Technology

Easy Adaptability with Mobile OCR Modules

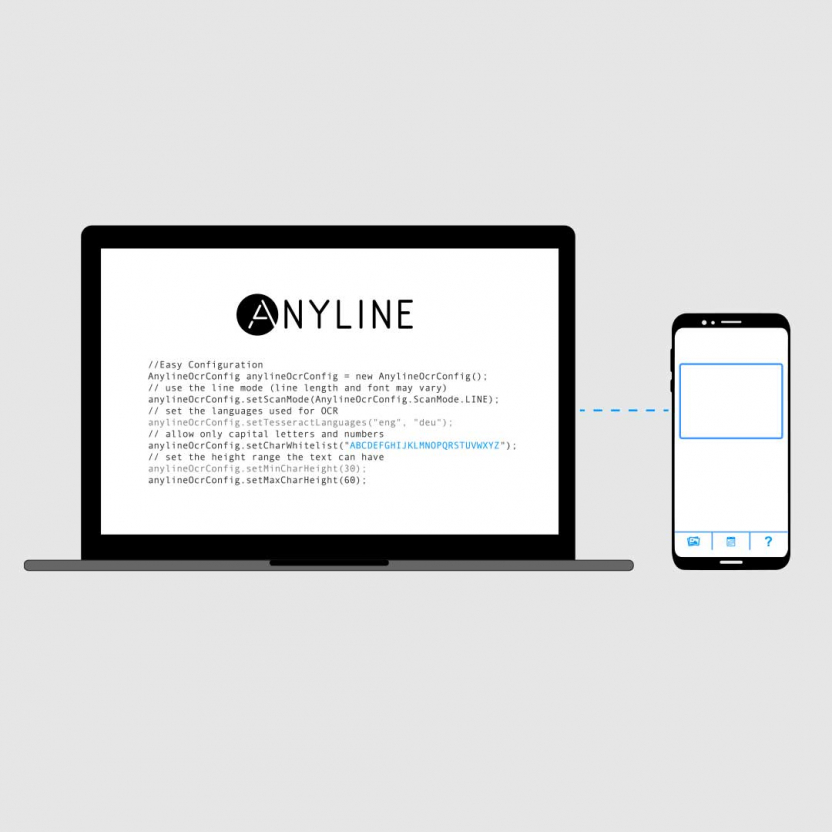

Anyline lets you add OCR scanning capabilities to your mobile app or website in an instant. No prior computer vision experience is required to embed and deploy the Anyline SDK.

You can take full advantage of mobile scanning technology by configuring what the scanner looks for. Adjust the mobile OCR SDK to scan different font sizes, font layouts, character sets and colors.

The Anyline SDK also comes with preconfigured OCR implementations. These help you to take your first steps in mobile scanning as soon as possible.

Full Cross-Platform Support

Anyline runs on a C++ core, ensuring easy integration of our mobile scanning technology on all major mobile platforms. You can add the Anyline SDK to your iOS and Android apps.

The Anyline SDK also runs on devices that are part of the Universal Windows Platform (UWP). Mobile scanning can be integrated through Cordova and Xamarin plugins or via React Native as well.

You also have the option of integrating mobile OCR scanning with your apps for smart and augmented reality (AR) glasses. Anyline is supported on Google Glass, Vuzix, Epson and many more leading smart and AR glasses.

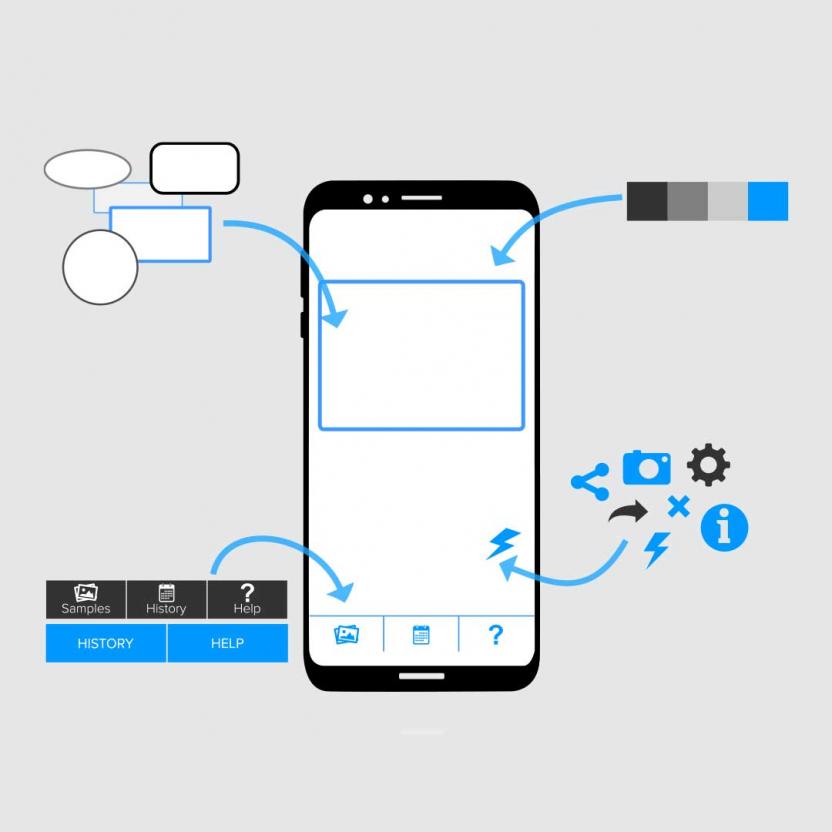

Complete UI Customization

Anyline delivers high-performance mobile scanning technology that is also easy to customize and integrate with your user experience. You maintain full control of the look and feel of your mobile app or website. Add feature highlighting, loading bars, or logos to your implementation as you wish.

The Anyline SDK includes configurable parameters so you can start applying computer vision and mobile scanning technology to your use case as soon as possible. Visual and haptic feedback as well as audio features are also included to make your users’ mobile scanning experience as simple as possible.

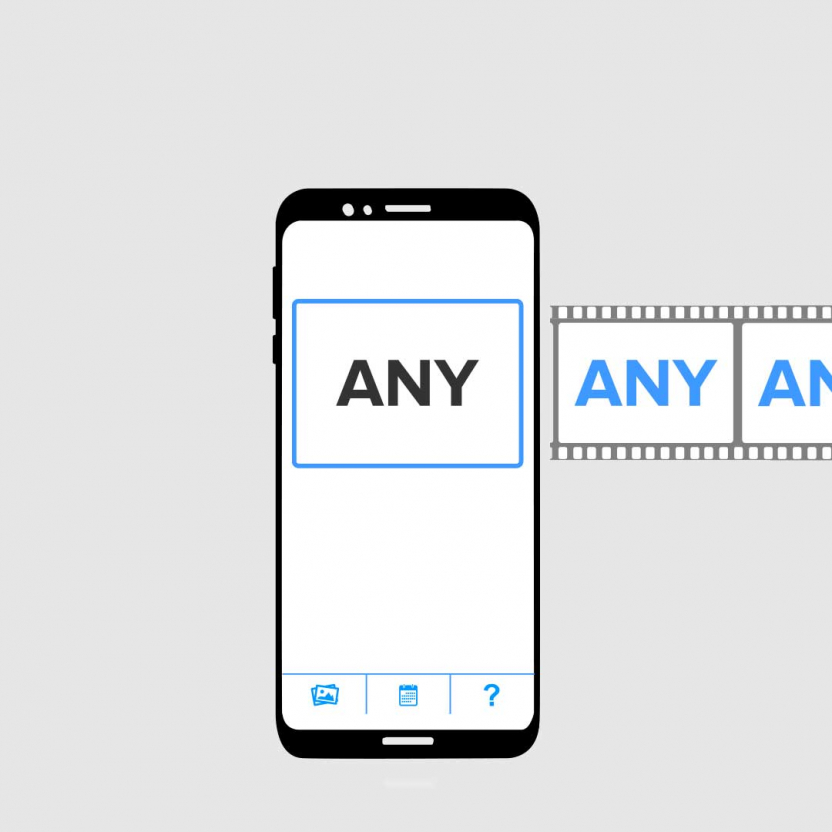

Real-Time Recognition

Anyline’s mobile scanning technology processes and extracts information from your device’s video stream. Values are recognized in near real time, within milliseconds, so scanning feels instant in the hands of your users.

There’s no need to take a photo of your scan target. Just point your device at it and let the video camera of your mobile device do the work. Anyline processes multiple frames from your video stream to detect and read your scan target. Once the mobile scanning software knows what it’s looking at, it transforms the data into digital output.

Because it has access to more than one camera frame, our mobile scanning technology can cross-check what it reads and deliver scan outputs with high accuracy.
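To illustrate why several frames help, here is a minimal sketch of one common approach, majority voting across per-frame readings. It is not Anyline’s actual algorithm, which this page does not describe. The function name `consensus_reading`, the `min_votes` threshold, and the sample readings are all assumptions made for the example.

```python
from collections import Counter
from typing import Iterable, Optional


def consensus_reading(
    frame_readings: Iterable[Optional[str]],
    min_votes: int = 3,
) -> Optional[str]:
    """Return the most frequent non-empty reading once it has enough votes.

    Hypothetical helper: each item is the text one video frame produced,
    or None when that frame yielded nothing readable.
    """
    # Count only frames that actually produced text
    votes = Counter(r for r in frame_readings if r)
    if not votes:
        return None
    value, count = votes.most_common(1)[0]
    # Accept the value only if enough frames agree on it
    return value if count >= min_votes else None


# Made-up readings: one frame misreads a digit, one frame finds no text
readings = ["01234", "01284", None, "01234", "01234"]
print(consensus_reading(readings))  # prints 01234
```

A single misread frame is outvoted by the frames that agree, which is the intuition behind reading from a video stream instead of a single photo.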

Full Offline Functionality

You can perform scans in any location using Anyline’s mobile scanning technology, thanks to the offline functionality of our mobile SDK. This makes it the perfect tool for scanning data in locations without signal, like cellars, tunnels, underground carparks or remote areas.

This offline functionality also makes Anyline a secure tool for scanning sensitive personal or financial data. None of your scan data needs to be sent to external cloud services for processing or storage. All scans are performed and stored on your mobile device until you decide to upload or transfer them to your own server backend or drive.
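The store-then-upload pattern described above can be sketched roughly as follows. This is an illustrative outline only and not part of the Anyline SDK: the class `LocalScanStore`, its methods, and the `send` callback are hypothetical names. The list is held in memory for brevity, whereas a real app would persist it to device storage.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class LocalScanStore:
    """Keeps scan results on the device until the app explicitly uploads them."""

    pending: List[str] = field(default_factory=list)

    def save(self, result: str) -> None:
        # No network call here: the result only enters local storage
        self.pending.append(result)

    def upload_all(self, send: Callable[[str], bool]) -> int:
        """Pass each pending result to `send`; keep failures for a later retry."""
        remaining: List[str] = []
        sent = 0
        for result in self.pending:
            if send(result):
                sent += 1
            else:
                remaining.append(result)
        self.pending = remaining
        return sent


store = LocalScanStore()
store.save("scan-001")  # works with no signal
store.save("scan-002")

# Later, when the user chooses to sync; `send` stands in for your own backend call
uploaded = store.upload_all(lambda result: True)
print(uploaded, store.pending)  # prints 2 []
```

Nothing leaves the device until `upload_all` is called, so the app decides when and where scan data is transferred.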

The Power of Machine Learning

Imagine if you could harness the power of AI to build a custom mobile scanning solution that never stops learning, one that grows smarter with every scan you make. That is the future of our scanning solutions. We want to make AI, machine learning, and computer vision accessible to everyone.

We also want to make sure that experience is as simple as possible for our users. That is why our AI solution is automated, enabling you to optimize your scanner simply by using it. This technology will allow every customer to create custom solutions to their exact specifications.