Computer Vision Meetup – July 2020: Safe & Secure Shared AI (S3AI)

It has been a long time since the Computer Vision Meetup Vienna group last came together! Due to the ongoing corona crisis, our group of AI, machine learning and computer vision enthusiasts has unfortunately not been able to meet in person, but this week we gathered in the virtual realm to listen to an outstanding presentation by Natalia Shepeleva about Safe and Secure Shared AI by Deep Model Design.

Natalia is working as a Researcher & Senior Data Scientist at the Data Analysis Systems (DAS) project of the Software Competence Center Hagenberg (SCCH), and we are very happy that she shared her expertise and insights into neural network quality ratings & neural network architectures with us.

In this blog, we’re sharing a synopsis of Natalia’s presentation, and if you’d like to dive a bit deeper, you can download her full slides here: “Security and Safety for Shared Artificial Intelligence” (PDF)

Data Analysis Systems & Knowledge-Based Vision Systems

Natalia started her presentation with overviews of the Software Competence Center Hagenberg as well as the “Data Analysis Systems” (DAS) & the “Knowledge Based Vision Systems” (KVS) projects.

The core areas of DAS are the development and improvement of methods for the automated analysis of data, such as sensor data, for the purpose of knowledge extraction, model refinement & optimization of various processes. This was underlined by a few use cases and real-life applications in smart maintenance and production.

The main research areas of the KVS project, on the other hand, are the development & improvement of methods for automated analysis of spatio-temporal data from images or videos, which can be applied to use cases in security & surveillance, as well as in industrial settings for quality inspection purposes.

Security and Safety for Shared Artificial Intelligence

Natalia went on to explain the focus areas of the Safe and Secure Shared AI (S3AI) project. S3AI focuses on research into collaborative deep learning and deep model design, and it is also a primary module of the SCCH in its capacity as a K1 center of the Austrian COMET technology funding program.

S3AI covers various aspects of deep learning research regarding the development of a new collaborative AI architecture, innovative mathematical methods for confidentiality & adversarial attack protection, as well as the actual application of deep learning for the secure usage of unseen data in industrial IoT & healthcare environments.

Quality Ratings of Neural Networks with ReLU Code Space

Following these interesting introductions to the various projects and research goals, Natalia went a bit deeper into one of her research papers, covering the area of “Rectified Linear Units” (ReLU) code space, which investigates the geometry behind the different neural networks.

NEURAL ARCHITECTURE SEARCH (NAS)

Natalia started this endeavour with a brief look at aggregated quality indices via Neural Architecture Search (NAS), in which 5 million models were trained & evaluated on CIFAR-10, followed by the team's own smaller NAS example based on VGG16. She showed the audience a comparison of the accuracy of different models with differing learning rates, and the challenge of picking the right model without further research into what happens within the models.

POLYHEDRAL BODIES OF RELU NETWORKS

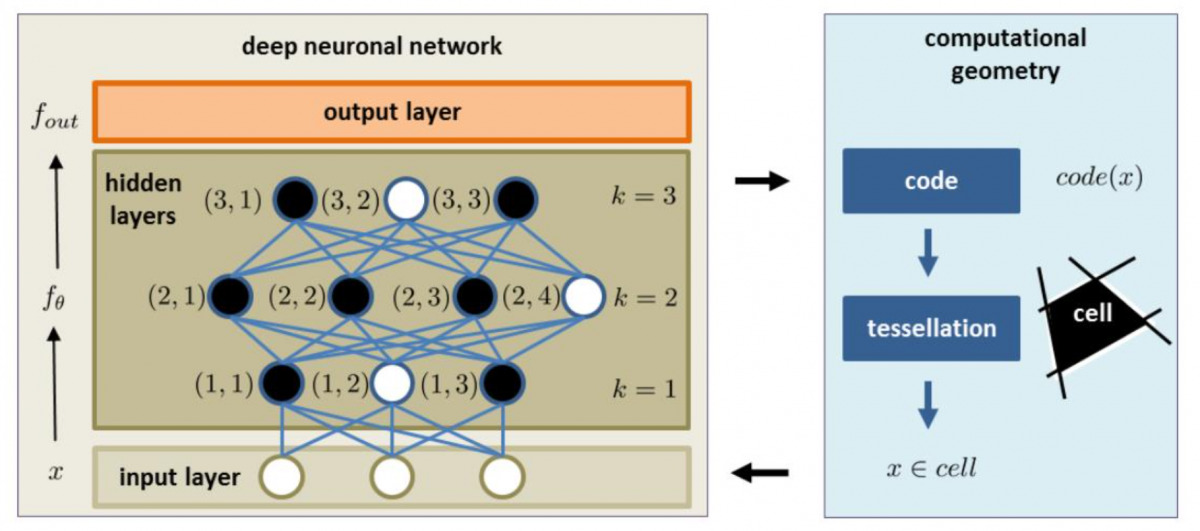

Trying to binarize the n-dimensional space of hidden layers & input layers to bring them into a computational geometric form might be a way to come closer to solving these kinds of challenges.
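To make the idea of binarizing a layer a bit more tangible, here is a minimal sketch in plain Python. It is not taken from Natalia's slides: the function name `relu_code`, the toy weights and the convention "1 if the pre-activation is positive, else 0" are assumptions chosen for illustration. Each hidden unit splits the input space along a hyperplane, so the resulting bit pattern tells us which side of every hyperplane an input falls on.

```python
def relu_code(x, weights, biases):
    """Binary activation pattern of one dense ReLU layer for a single input.

    Assumed convention: bit i is 1 if unit i has a positive pre-activation
    (the ReLU is "on"), otherwise 0.
    """
    code = []
    for w_row, b in zip(weights, biases):
        pre_activation = sum(w * xi for w, xi in zip(w_row, x)) + b
        code.append(1 if pre_activation > 0 else 0)
    return tuple(code)


# Hypothetical toy layer: 2 inputs, 3 hidden units (values made up).
weights = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
biases = [0.0, 0.0, -1.0]

for point in ([0.5, 0.2], [0.9, 0.8], [-1.0, 3.0]):
    print(point, "->", relu_code(point, weights, biases))
```

Inputs that produce the same code lie in the same polyhedral region of the input space, so the code acts as a label for that region.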

DUAL REPRESENTATION & ISOMETRY THEOREMS

Based on the individual code sets of all inputs combined, it’s possible to compute a Hamming distance (the number of positions in which two binary codes differ), which makes it possible to have a dual representation theorem of the code space and the side of the equivalence classes (cells, defined by the tessellation).
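As a small illustration of the Hamming distance on such binary codes, the following sketch compares three made-up codes. The helper name `hamming_distance` and the example codes are assumptions for this post, not part of the presented work.

```python
def hamming_distance(code_a, code_b):
    """Number of positions in which two equal-length binary codes differ."""
    if len(code_a) != len(code_b):
        raise ValueError("codes must have the same length")
    return sum(a != b for a, b in zip(code_a, code_b))


# Hypothetical ReLU codes of three inputs (values made up).
codes = {
    "x1": (1, 1, 0),
    "x2": (1, 1, 1),
    "x3": (0, 1, 1),
}

print(hamming_distance(codes["x1"], codes["x2"]))  # differ in one unit
print(hamming_distance(codes["x1"], codes["x3"]))  # differ in two units
```

A distance of 0 means both inputs share the same code, i.e. they fall into the same cell.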

The aggregated ReLU code space of all input data makes it possible to calculate the adjacency of metric space between the measured metrics, or in other words, it enables us to look at how the input reflects the actual output decisions.

VGG16 & AUTOENCODER EXPERIMENTS

To take a closer look at this, Natalia & her team at DAS designed 3 experiments.

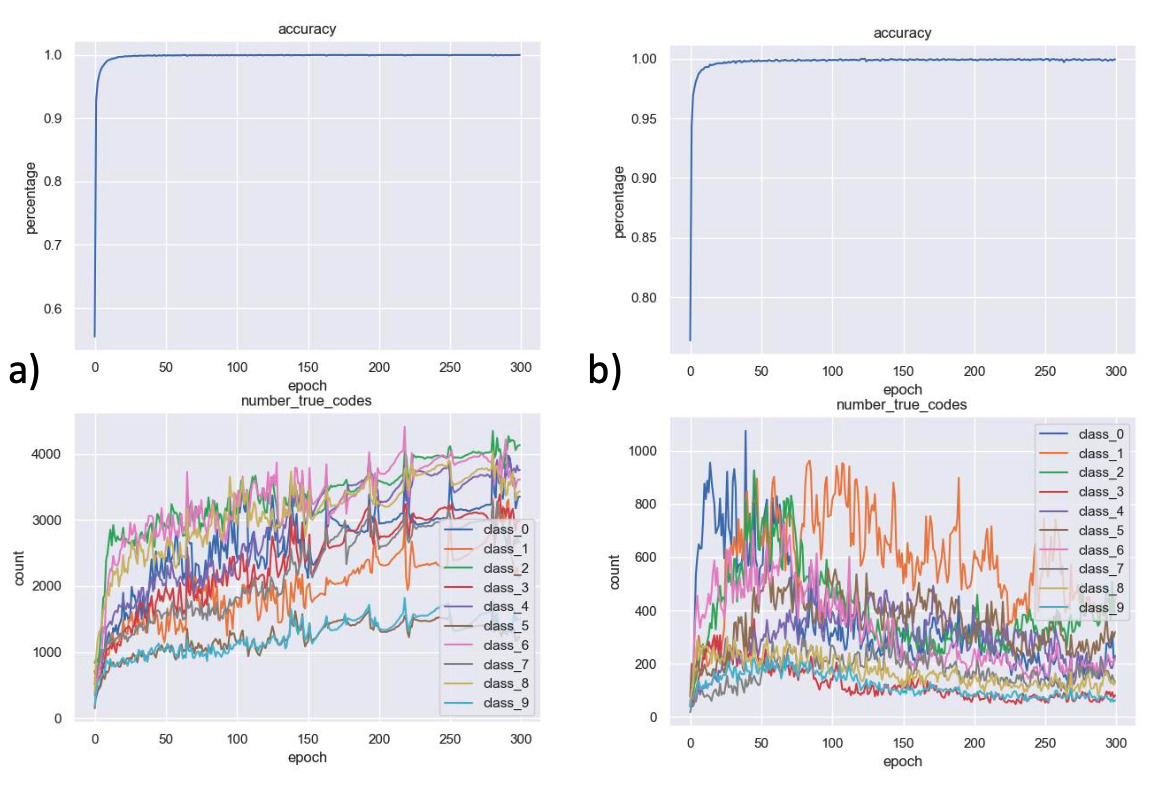

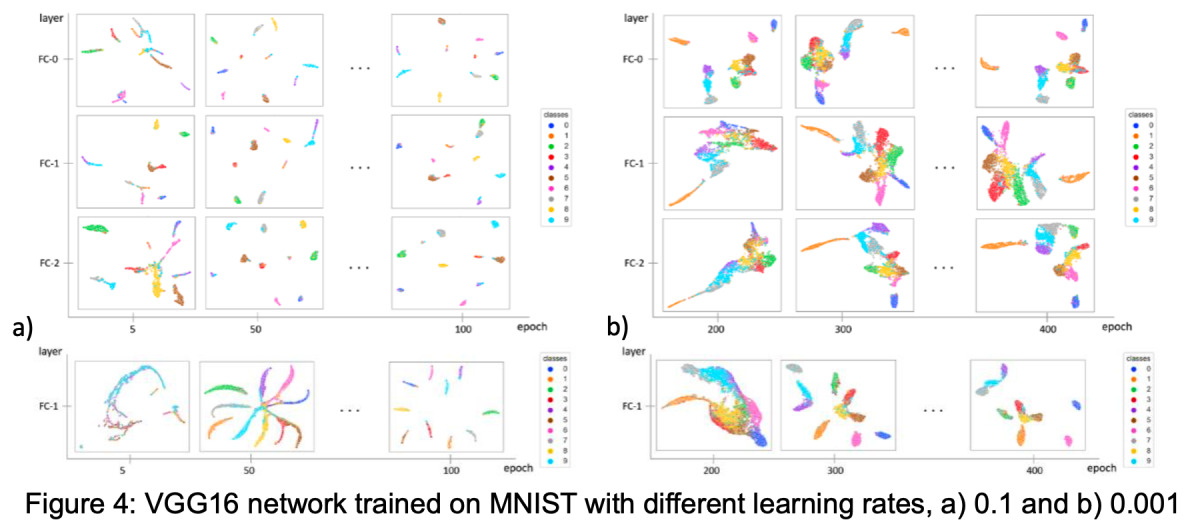

In the 1st experiment, a VGG16 network was trained on MNIST with different learning rates, leading to an unexpected and rapidly changing evolution of the number of cells in the results, which is still baffling the researchers involved and is a matter of current research.
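One plausible way to read "number of cells" is the number of distinct ReLU codes that a dataset produces at a given point in training. The sketch below follows that reading; it is an assumption for illustration, not the team's actual measurement, and the codes are made up. Note that it only counts cells occupied by at least one input.

```python
from collections import Counter

# Hypothetical ReLU codes for six inputs at one training step (values made up).
codes = [
    (1, 1, 0),
    (1, 1, 1),
    (1, 1, 0),
    (0, 1, 1),
    (1, 1, 1),
    (1, 1, 0),
]

# Each distinct code is treated as one occupied cell;
# the count per code is the number of inputs in that cell.
cell_sizes = Counter(codes)

print("occupied cells:", len(cell_sizes))
for code, size in cell_sizes.most_common():
    print(code, "contains", size, "inputs")
```

Repeating such a count after every epoch would give a curve of occupied cells over training time.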

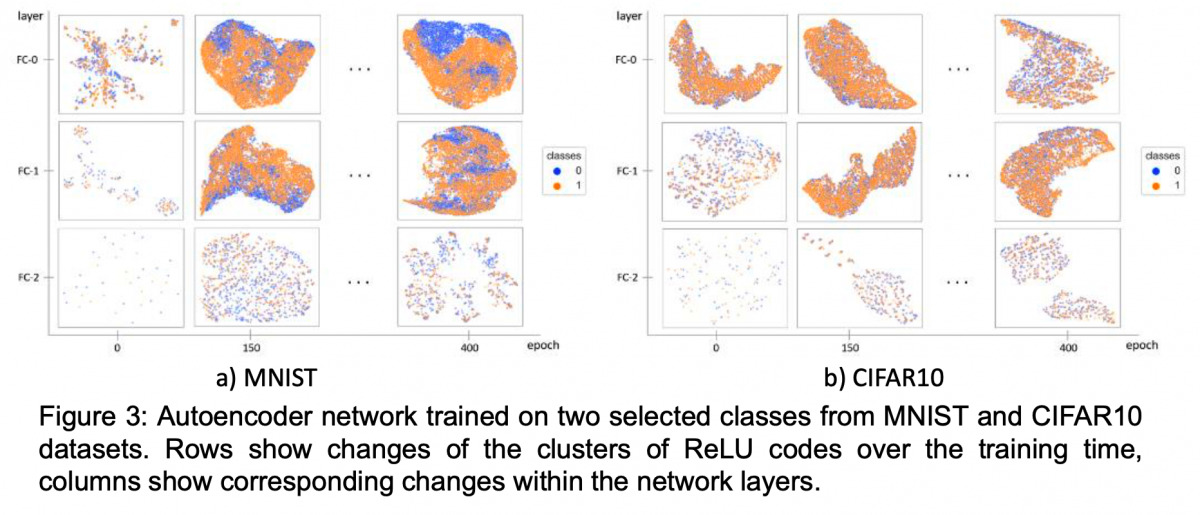

In the following 2nd experiment, an autoencoder network was trained on classes from the MNIST & CIFAR-10 datasets. The results were displayed as UMAP clusters, showing the changes of clusters in ReLU codes/cells over training time and the corresponding changes in the network layer. They indicate distinct differences between the models, from the first layer on, but with progressing training time some similarities between the models occur as well.

In the 3rd experiment, a VGG16 network was again trained on MNIST with different learning rates, and a UMAP clustering was made to take a closer look at how different learning rates affect the separation of cell clusters over training time. This revealed some strange behavior: the bigger gradient / learning rate gave a better separation, although it might not give the best accuracy compared to the other model.

Based on these experiments and the ongoing research at DAS & SCCH, Natalia pointed out that one of her goals is to continue to find ways to identify which networks are the best and why, what the underlying factors for their performance are, how to make different neural network models more accurate and more resistant to adversarial attacks, and how to combine this information and knowledge to merge the different realms of data.

Join the Computer Vision Ranks!

Want to present your project in AI, computer vision, deep learning or machine learning? Do you know someone who would like to share some experiences & knowledge with fellow tech enthusiasts? Join our ranks in the Computer Vision Vienna group on Meetup!

Just message us there or go the old-fashioned way by contacting us via email – we will get in touch with you asap & reserve you a sweet spot in one of our upcoming meetups. Looking forward to meeting you! 🙂